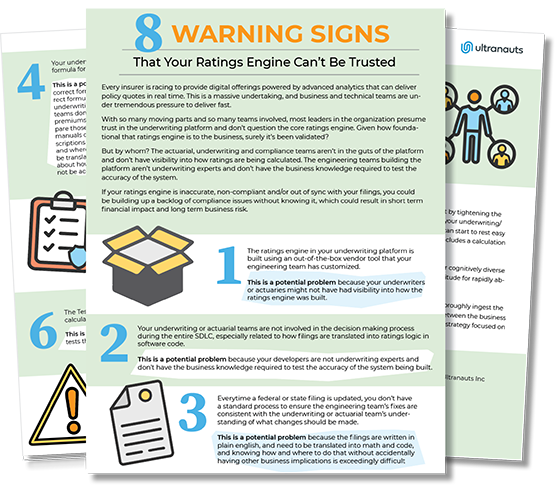

8 WARNING SIGNS That Your Ratings Engine Can’t Be Trusted

This is a massive undertaking, and business and technical teams are under tremendous pressure to deliver fast. With so many moving parts and so many teams involved, most leaders in the organization simply trust the underwriting platform and don’t question the core ratings engine. Given how foundational that ratings engine is to the business, surely it’s been validated? But by whom? The actuarial, underwriting and compliance teams aren’t in the guts of the platform and don’t have visibility into how ratings are being calculated. The engineering teams building the platform aren’t underwriting experts and don’t have the business knowledge required to test the accuracy of the system. If your ratings engine is inaccurate, non-compliant or out of sync with your filings, you could be building up a backlog of compliance issues without knowing it, which could result in short-term financial impact and long-term business risk.

The ratings engine in your underwriting platform is built using an out-of-the-box vendor tool that your engineering team has customized.

This is a potential problem because your underwriters or actuaries might not have had visibility into how the ratings engine was built.

Your underwriting or actuarial teams are not involved in decision-making throughout the SDLC, especially in how filings are translated into ratings logic in software code.

This is a potential problem because your developers are not underwriting experts and don’t have the business knowledge required to test the accuracy of the system being built.

Every time a federal or state filing is updated, you don’t have a standard process to ensure the engineering team’s fixes are consistent with the underwriting or actuarial team’s understanding of what changes should be made.

This is a potential problem because the filings are written in plain English and need to be translated into math and code, and knowing how and where to do that without accidentally causing other business implications is exceedingly difficult.

Your underwriting team is not able to articulate the formula for your premium calculations.

This is a potential problem because engineers need correct formulas to write code, and constructing a correct formula requires a thorough understanding of the underwriting rules. As additional context: underwriting teams don’t think in terms of formulas; they create premiums based on each individual risk factor and compare those to the underwriting manuals. The manuals describe ratings in plain English, but those descriptions leave room for interpretation about how and where calculations are made. Interpretations can’t be translated directly into code, so engineers make assumptions about how to read the language, and those assumptions may or may not be accurate.
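To make the interpretation problem concrete, here is a minimal sketch of how one plain-English rule can produce two different premiums. The rule ("apply a 10% discount and a 15% surcharge"), the rates, and the function names are all hypothetical and are not taken from any real filing.

```python
from decimal import Decimal

# All values below are hypothetical, chosen only for illustration.
BASE_RATE = Decimal("1000.00")  # assumed base premium
DISCOUNT = Decimal("0.10")      # assumed 10% discount
SURCHARGE = Decimal("0.15")     # assumed 15% surcharge


def premium_sequential(base):
    """Reading 1: apply the discount, then apply the surcharge to the result."""
    return (base * (1 - DISCOUNT) * (1 + SURCHARGE)).quantize(Decimal("0.01"))


def premium_netted(base):
    """Reading 2: net the two percentages, then apply them once."""
    return (base * (1 - DISCOUNT + SURCHARGE)).quantize(Decimal("0.01"))


if __name__ == "__main__":
    print(premium_sequential(BASE_RATE))  # 1035.00
    print(premium_netted(BASE_RATE))      # 1050.00
```

Both readings are defensible from the wording alone, yet they differ by 15.00 on a 1,000.00 base. Only the underwriting or actuarial team can say which one matches the filing.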

Few or none of your developers or quality engineers know enough about your underwriting rules to write a policy and understand what the expected premium should be.

This is a potential problem because if developers and quality engineers aren’t familiar enough with the underwriting rules to write a policy, they won’t be able to write a test to validate the policy.

The Test Suite for your underwriting platform (and ratings engine) does not include a calculation of premiums to compare to the output of the ratings engine.

This is important because in order to validate the logic of the ratings engine, you need tests that compare the output to the expected premium for a given policy.
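As a rough sketch of what such a test could look like, the example below computes the expected premium independently, from a rating table as the underwriting team would read it, and compares it to the engine's output. The base rate, territory factors, and `fake_engine` are assumptions standing in for a real filing and a real ratings engine.

```python
from decimal import Decimal

# Hypothetical rating inputs, as an underwriter might read them from a filing.
BASE_RATE = Decimal("500.00")
TERRITORY_FACTORS = {"A": Decimal("1.00"), "B": Decimal("1.20")}


def expected_premium(policy):
    """Independent calculation written from the filing, not from the engine's code."""
    factor = TERRITORY_FACTORS[policy["territory"]]
    return (BASE_RATE * factor).quantize(Decimal("0.01"))


def check_premium(policy, engine):
    """Compare the engine's premium with the independently calculated one."""
    expected = expected_premium(policy)
    actual = engine(policy)
    return expected == actual, expected, actual


def fake_engine(policy):
    """Stand-in for the real ratings engine; deliberately mis-rates territory B."""
    factor = Decimal("1.00") if policy["territory"] == "A" else Decimal("1.25")
    return (BASE_RATE * factor).quantize(Decimal("0.01"))


if __name__ == "__main__":
    for territory in ("A", "B"):
        ok, expected, actual = check_premium({"territory": territory}, fake_engine)
        print(territory, "PASS" if ok else f"FAIL expected={expected} actual={actual}")
```

The key design choice is that `expected_premium` is written from the filing rather than copied from the engine, so the two calculations cannot share the same misreading.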

When an error is detected in the ratings engine, the test that was run does not allow for root cause analysis.

This is a potential problem because without tests that reveal the root cause, engineering teams won’t know where to look in order to fix the issue.
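One way a test can point toward the root cause is to compare intermediate rating steps, not just the final premium. The sketch below assumes the engine can report its step-by-step values in the same order as the expected calculation. The step names and amounts are hypothetical.

```python
from decimal import Decimal


def first_divergence(expected_steps, actual_steps):
    """Return (step name, expected, actual) for the first step that differs, else None.

    Assumes both lists hold (name, value) pairs for the same steps in the same order.
    """
    for (name, expected), (_, actual) in zip(expected_steps, actual_steps):
        if expected != actual:
            return name, expected, actual
    return None


# Hypothetical step-by-step values for one policy.
expected_steps = [
    ("base_rate", Decimal("500.00")),
    ("after_territory_factor", Decimal("600.00")),
    ("after_discount", Decimal("540.00")),
]
actual_steps = [
    ("base_rate", Decimal("500.00")),
    ("after_territory_factor", Decimal("625.00")),
    ("after_discount", Decimal("562.50")),
]

if __name__ == "__main__":
    print(first_divergence(expected_steps, actual_steps))
```

Here the final premiums differ, but the first mismatch is at the territory factor, which tells the engineering team where to start looking.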

When an error is detected in the ratings engine, you don’t have an efficient process for the engineering team to communicate with the underwriting and actuarial teams.

This is important because the engineering team’s interpretation of the significance and cause of the error may not be the same as the underwriting or actuarial team’s.

If you’re concerned that your ratings engine can’t be trusted, you can start by tightening the collaboration between your development/quality engineering teams and your underwriting/actuarial teams. If they are collaborating well but you’re still not sure, you can start to rest easy when the Test Suite for your underwriting platform (and ratings engine) includes a calculation of premiums to compare to the output of the ratings engine. If you’d like a partner who can do the heavy lifting, Ultranauts can help. Our cognitively diverse teams, 75% of whom are autistic, combine deep technical skills with an aptitude for rapidly absorbing complex domain knowledge so we can hit the ground running. We will invest the time to work closely with your underwriting team and thoroughly ingest the underwriting rules and regulatory requirements, serve as a translator between the business and technical teams when needed, and develop a scalable test automation strategy focused on mitigating risk.

Additional Reading

Andrade, Liz. (2021). How Do You Know You Can Trust Your Ratings Engine? Ultranauts Inc

Ultranauts helps companies establish and continually improve data quality through efficient, effective data governance frameworks and other aspects of data quality management systems (DQMS), especially high impact data value audits. If you need to improve data understanding at your organization, or figure out which data assets and pipelines to test to generate the greatest business value, get in touch with us. Ultranauts can quickly help you identify opportunities for improvement that will drive value, reduce costs, and increase revenue.