The Key to Unleashing The Value of AI Systems? It’s Not More Data.

In June 2022, MIT Sloan posted an article about Andrew Ng, a co-founder and former head of Google Brain and former chief scientist at Baidu. Its summary made a compelling statement:

“Machine learning pioneer Andrew Ng argues that focusing on the quality of data fueling AI systems will help unlock its full power.”

Recognizing that most organizations fall short when it comes to ensuring data quality, he recommended that organizations introduce Data-Centric AI, or “the discipline of systematically engineering the data needed to build a successful AI system.” He emphasized three practices that were consistently showing up as threats to solid, rigorous AI systems applied to practical problems:

- Ambiguous representation. Professionals in different fields think differently, and this manifests in inconsistent labeling for datasets that are used for supervised machine learning. Ambiguity can also extend beyond labeling, and into how problems and architectures are described and structured. A lack of transparency across departments can quickly lead to ambiguity and guesswork that undermine the efficacy of AI-based systems.

- The bias towards big data. A common belief is that more data is better, but statisticians and astrophysicists would disagree: just enough of the right data is better. The big data bias has led organizations to store massive amounts of historical data that no one will ever have time to examine. This “dark data” can be costly to manage, but costlier to ignore.

- Inconsistent data curation. Even if you’re lucky enough to be in an organization where data stewards know a lot about the data they manage, data curation is a skill that can take years to develop. Making the curation process more systematized, claims Ng, will help data scientists downstream use inputs in the most appropriate ways.
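One way to make the first point concrete is to measure how often two people label the same items the same way. The sketch below is only an illustration and does not come from Ng's article: it uses Cohen's kappa, a standard agreement statistic, and the function name, label values, and annotator lists are all hypothetical.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # Share of items on which the two annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Agreement expected by chance, from each annotator's label frequencies.
    freq_a = Counter(labels_a)
    freq_b = Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical labels from two annotators for the same six records.
annotator_1 = ["defect", "ok", "ok", "defect", "ok", "ok"]
annotator_2 = ["defect", "ok", "defect", "defect", "ok", "ok"]
kappa = cohen_kappa(annotator_1, annotator_2)
print(f"kappa = {kappa:.2f}")
```

A value near 1 suggests the annotators share a labeling convention, while a value near 0 suggests agreement is no better than chance and the labeling guidelines may need to be clarified before the data is used for training.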

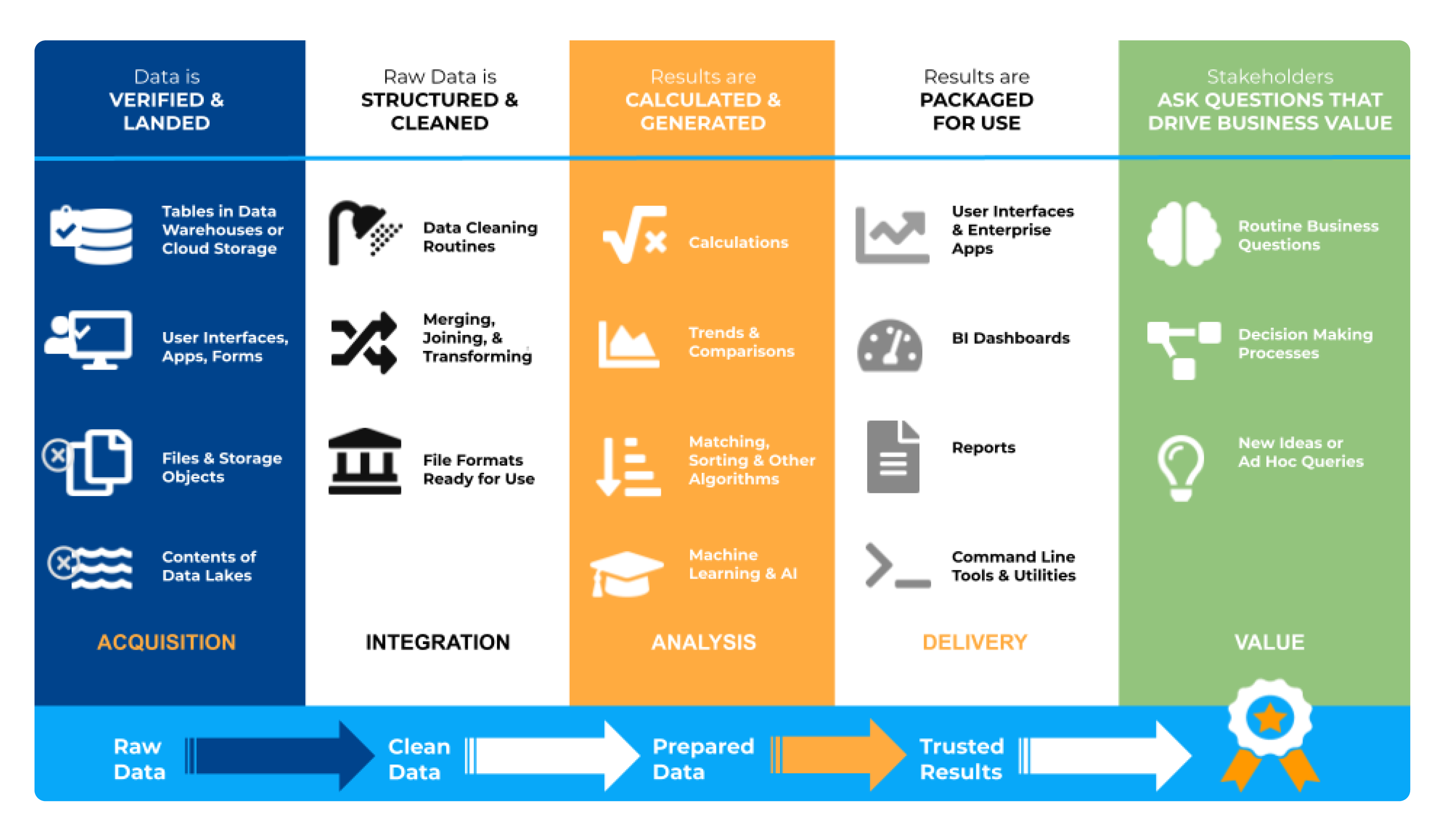

So does this mean that an entirely new way of approaching problems needs to be discovered for the true value of AI-based systems to be unleashed? Fortunately, the answer is no. Ultranauts has been working on this very problem for the past three years, developing actionable ways to help organizations find and remove systematic barriers to high-quality data and information. The key is to link data lineage to data value, which is as much an art as a science. With this approach, it becomes easier to see which parts of the data management ecosystem are driving value for AI-based systems, and to concentrate improvements there.

Many organizations consider data quality a “solved problem,” addressable by implementing a patchwork quilt of enterprise software systems for data management. But ask anyone on the business side whether they trust the quality of the data they use every day, and the majority will say no. That’s because for more than two decades, we’ve treated data quality as a problem to be solved close to the data, rather than a problem to be solved by examining what value the data provides in specific, meaningful use cases.

Ultranauts helps companies establish and continually improve data quality. Our teams help clients implement efficient, effective data governance frameworks and other aspects of data quality management systems (DQMS), especially high-impact data value audits. If you need to improve data understanding at your organization, Ultranauts can quickly help you identify opportunities for improvement that will drive value, reduce costs, and increase revenue.

Additional Reading:

Brown, S. (2022, June 7). Why it’s time for 'data-centric artificial intelligence'. MIT Sloan, Ideas Made to Matter. Available from https://mitsloan.mit.edu/ideas-made-to-matter/why-its-time-data-centric-artificial-intelligence